This is a prerelease version of this book. Feel free to check if out! I would greatly appreciate it if you left me some feedback. If something is wrong, feel free to leave a merge request on the repository.

Rust Project Primer

A Practical Guide on how to Structure and Maintain your Rust Projects

by

Patrick M. Elsen

CC BY-NC-SA 4.0 Licensed

Preface

This book exists because learning Rust and learning how to run a Rust project are two different things. There are excellent resources for the language itself: The Rust Book, Rust by Example, to name just a few. But once you know the syntax and the borrow checker, you still need to figure out how to structure a codebase, set up CI, manage dependencies across a workspace, write tests that actually catch bugs, and ship the result to users. There are established patterns in the Rust community, but that knowledge is scattered across blog posts, README files, and tribal experience. I wrote this book to bring it together in one place.

The book assumes you already know Rust. It is not a language tutorial. Instead, it focuses on the practical side of running a Rust project: how to organize code, which tools to use for formatting, linting, testing, and benchmarking, how to set up continuous integration, how to document and release your work, and how to make good choices when the ecosystem gives you several options. Where possible, I try to explain the tradeoffs rather than just prescribing a single answer.

Much of what is in here comes from years of working with Rust professionally and learning from open-source projects, and from studying how well-run projects in the ecosystem handle the same problems. I have tried to make it information-dense and practical: something you can read through once to get the lay of the land, and come back to later when you need to set up a specific tool or make a specific decision.

Rust is not perfect, but it is a language that rewards investment. The tooling is good, the ecosystem is maturing rapidly, and the community cares deeply about quality. I hope this book helps you build on that foundation, and helps give you the tools you need to structure your project well, so that you can focus on writing great code and get the most out of the ecosystem.

Introduction

Once you are comfortable with the Rust language, the next set of questions is about the ecosystem and the practices around it. How do you structure a project that will grow over time? Which libraries do you reach for when you need logging, serialization, or error handling? How do you set up CI so that formatting, linting, testing, and auditing happen automatically? How do you release your work — to crates.io, as a container image, as a system package?

This book is organized around those questions. Each chapter covers a different aspect of running a Rust project:

- Development Environment covers editor setup and toolchain configuration.

- Build System covers Cargo, Nix, Bazel, and other build tools, and how Rust code can fit into projects written in other languages.

- Organization explains how to structure a codebase as it grows: when to split into multiple crates, how to use workspaces, and how to lay out a repository.

- Ecosystem surveys popular Rust libraries for common problems: logging, errors, serialization, concurrency, and more. It explains the tradeoffs between competing options so you can pick the right one.

- Interop covers calling C, C++, Python, and other languages from Rust, including the FFI frameworks available and common hazards to watch for.

- Checks explains how to automatically verify properties of your code: formatting, linting, dependency auditing, semver correctness, and more. These are the tools that catch problems before they reach code review.

- Testing covers strategies for verifying correctness, from unit tests and property testing to fuzzing, mutation testing, and dynamic analysis with tools like Miri.

- Measure explains how to collect metrics about your codebase: test coverage, benchmarking, and memory profiling.

- Building covers what happens during

cargo build: reducing binary size, tuning compiler output for performance, cross-compiling for other platforms, and caching builds. - Documentation covers how to write and publish documentation, from API-level rustdoc to standalone books with mdBook and architecture decision records.

- Releasing explains the process of shipping your work to users: versioning, changelogs, publishing to crate registries, building container images, and creating system packages.

- Continuous Integration ties the preceding chapters together by showing how to run checks, tests, and builds automatically on every commit, with examples for GitHub Actions and GitLab CI.

- Tools covers general-purpose development tools that are useful across workflows: code search, task runners, macro expansion, and debuggers.

Not every chapter will be relevant to every project, and you do not need to adopt everything at once. Chapters are self-contained, so you can read the book cover to cover or use it as a reference: jump to whichever chapter addresses the problem you are facing now, and come back for others as the need arises. The Resources chapter lists books and courses for learning the Rust language itself.

Resources

The rest of this book assumes you are comfortable with Rust as a language. If you are still learning, or want to deepen your understanding of specific areas like async or atomics, the resources below are a good place to start.

Books

The Rust Programming Language, 2nd Edition by Steve Klabnik and Carol Nichols

The official book of the Rust programming language. Covers the language and toolchain from the ground up, with example projects that show how concepts fit together in practice. The starting point for most Rust developers. Also available in print.

Effective Rust by David Drysdale

Hands-on recommendations for writing idiomatic Rust code, organized as a series of actionable items covering types, traits, error handling, dependencies, and tooling. Particularly strong on the “why” behind Rust idioms. Also available in print.

Rust for Rustaceans by Jon Gjengset

A deep dive for developers who already know the basics. Covers designing interfaces, writing effective tests, unsafe code, async internals, and performance. Contains one of the clearest explanations of how async works under the hood.

Rust Atomics and Locks by Mara Bos

Covers low-level concurrency: atomics, memory ordering, and lock implementations. Essential reading if you need to implement custom synchronization primitives or understand why certain concurrent patterns are safe in Rust and others are not.

Rust Design Patterns (archived) by Rust Community

A community-maintained catalogue of design patterns, anti-patterns, and idioms specific to Rust. Each entry includes rationale explaining why a pattern works well or why an anti-pattern should be avoided.

The Rustonomicon by The Rust Project

The official guide to unsafe Rust. Covers raw pointers, transmutes, uninitialized memory, the Drop Check, and the exact rules for what constitutes undefined behavior. Essential reading if you work with FFI (see the Interop chapter) or need to implement data structures that require unsafe code.

Rust by Example by The Rust Community

A companion to The Rust Programming Language that teaches through annotated, runnable examples rather than long explanations. Each concept is demonstrated with code you can modify and run in the browser. A good option if you prefer learning by doing.

The Cargo Book by The Rust Project

The official reference for Cargo: dependency management, workspace configuration, build scripts, feature flags, publishing, and custom profiles. Since nearly every chapter in this book involves Cargo in some way, this is a useful reference to keep at hand.

For more Rust books, see The Little Book of Rust Books and The Rust Bookshelf.

Courses

Comprehensive Rust by Google

A multi-day Rust training course developed by Google’s Android team. Covers the language from basics through advanced topics like async and unsafe, with exercises throughout. A good option if you prefer structured, classroom-style learning.

Zero to Production in Rust by Luca Palmieri

A practical guide that walks through building a production-ready web application in Rust, covering project setup, database migrations, logging, error reporting, and deployment. Good for seeing how the tools and practices discussed in this book come together in a real project.

Articles

These articles cover similar ground to this book, approaching Rust project practices from different angles. Reading them alongside this book gives you a broader perspective on where the Rust community has converged and where opinions still differ.

One Hundred Thousand Lines of Rust by Alex Kladov

Lessons from maintaining several mid-sized Rust projects, including rust-analyzer. Covers documentation, testing strategies, build times, and project organization. Many of the recommendations align with what this book covers, but from the perspective of someone maintaining widely-used developer tools.

Basic Things by Alex Kladov

Argues that foundational infrastructure (documentation, code review, testing, reproducible builds, metrics) compounds over time and becomes a major multiplier as projects grow. A good companion to the Checks and Testing chapters of this book.

My Ideal Rust Workflow by Amos Wenger

A detailed walkthrough of one developer’s professional Rust setup, covering editor configuration, automated checks with Clippy and cargo-hack, CI pipelines, and private infrastructure. Useful for seeing how the individual tools discussed in this book fit together in a cohesive workflow.

Good Practices for Writing Rust Libraries by Pascal Hertleif

A practical checklist for publishing Rust libraries: code quality tools (rustfmt, Clippy, lints), project metadata, README conventions, CI setup, and documentation deployment. Written in 2015 but most of the advice remains relevant.

Describes the testing strategy for Sciagraph, a Python memory profiler built with Rust. Covers coverage marks (verifying specific code paths are hit), property-based testing with proptest, end-to-end tests in both debug and release modes, and panic injection testing. Also discusses choosing Rust for memory safety, wrapping unsafe APIs in safe interfaces, and environmental assertions at startup to catch configuration mismatches.

Videos

Setting up CI and Property Testing for a Rust Crate by Jon Gjengset

Jon walks through setting up a CI pipeline and property testing for one of his crates, explaining his reasoning at each step. A good complement to the Testing and CI chapters of this book, as it shows the process of making these decisions in real time.

Development Environment

This chapter explains what you need to get started writing a Rust project. It outlines how you can install a Rust toolchain, and what editors or IDEs you can use to write Rust code. If you already have a Rust toolchain installed and you have an editor or an IDE that you are comfortable using, you can safely skip this chapter.

Fundamentally, you need two pieces of software to get started with your Rust project:

- Rust toolchain: with the components needed for formatting, linting Rust code, in the correct version, and with the right targets.

- Code editor: with support for Rust through syntax highlighting and ideally

integration with

rust-analyzer.

This section outlines how you can set up your environment to be able to write Rust productively, by showing you ways to get a Rust toolchain installed and by examining some popular code editors used by the Rust community.

A lot of this book is very command-line centric and as such you may find the experience of using these tools slightly easier on UNIX-like operating systems such as Linux or macOS. This should not come as a surprise, as the majority of Rust developers work on and target Linux according to the 2023 survey. However, Rust loves Windows too, and most of the tools explained here should work on any platform. I try to point out any commands that either don’t work on natively on Windows or require special setup. You can always try WSL2 to run things if you run into any issues.

Rust Toolchain

The bare minimum you need to get started with to write and build Rust code is a

text editor and rustc. However, to do meaningful work, you will likely also

need Cargo and some way to manage it, for example to update your Rust toolchains

or install support for other targets like WebAssembly.

Rust toolchain consists of:

| Item | Description |

|---|---|

rustc | Rust compiler |

cargo | Rust package manager and build system |

rustfmt | Rust code formatter |

clippy | Rust linter, and automatically fix code issues |

rust-std | Rust standard library source code, used when requesting rustc to build it from source |

rust-docs | Documentation for Rust’s standard library |

There are different release channels. The stable channel tracks stable Rust

releases, such as 1.80, while the nightly channel tracks nightly releases

that come with more features, but which might be unstable. Generally, you want

to stick to the stable release channels, unless you have a specific reason to

use the nightly ones (for example, you need to use a feature that is

unstable).

Depending on what you are writing software for, you may also want to install

toolchains for different targets. For example, you may need the targets

x86_64-unknown-linux-gnu to build software for Linux, wasm32-unknown-unknown

to build software for WebAssembly targets, or thumbv6m-none-eabi to target

Cortex-M0 ARM microcontrollers.

Your operating system might have Rust available in its package manager, however you should be careful about using it. The version available might be outdated, or there might not be a way to use Rust nightly or install a different target. For some tasks, such as writing WebAssembly web frontends in Rust or doing embedded development, you will need to install additional targets so that Rust knows how to compile your code.

You will likely want some way to not only install Rust, but also manage the components and targets, update the toolchain and have the ability to install different versions of the toolchain side-by-side to work on your project.

Rustup

The recommended approach to install and manage Rust toolchains, components and targets is Rustup. It lets you install different versions of the toolchain side-by-side, switch between them either explicitly or with some configuration inside your project.

To install rustup on Linux, you can run the following command. If you are

using Windows, you can find installation instructions on the website.

curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

With Rustup installed, you should now have access to Cargo and you can use it to manage your Rust installation. Here are some useful commands for reference:

# install a different version of the toolchain (can also give a specific version)

rustup install nightly

rustup install 1.80.0

# install a target

rustup target add wasm32-unknown-unknown

# update your Rust toolchain

rustup update

When you use Cargo, Rustup will use your default toolchain. For most of your

development, this should be sufficient. However, you can always override this to

use a specific toolchain, for example to use nightly for a specific command by

adding +<version> to any command:

# build and run tests using the nightly toolchain

cargo +nightly test

If you have Rustup installed and Cargo works, then you are set up for using Rust.

Nix

While Rustup is the most popular and preferred way to manage Rust toolchains, it is not the only way you can use to install and manage Rust toolchains. Another popular tool used by Rustaceans to manage their toolchains is Nix, which is a declarative package manager and build system.

Todo

Editors and IDEs

Preferences for development environments amongst developers varies widely. Some developers prefer light-weight editors such as vim, neovim, or helix. These have the advantage of being fast and portable, tend to be easy to extend and rely on keyboard shortcuts to avoid being slowed down by using a mouse. Especially terminal-native developers tend to prefer enjoy these editors, because it means they can do all of their development in the terminal and can even use these editors remotely over SSH.

The other camp likes using IDEs, which are graphical tools for writing code. They tend to integrate very well into the programming languages and have compelling features such as jump-to-definition, show type information or have debugging support built-in. IDEs used to have a bad reputation for being rigid, but modern ones are just as extensible as command-line editors.

This survey shows that the two most popular editors for Rust are VS Code, and Vi-family editors (which I group together as Vim). The Zed editor is also popular, but did not appear in this survey, likely because it was not stable at the time the survey was run.

We can cluster the editors into two groups:

- Graphical IDEs: Includes VS Code, Rust Rover, Sublime Text, Visual Studio, Xcode, Atom.

- Terminal-based editors: Vim, Helix, Emacs

In general, Graphical IDEs are more friendly to beginners. For this reason, the editors discussed in this chapter focusses mainly on these. The Terminal-based editors have their own advantages, but they require more learning and unless you are already familiar with them, it likely does not make sense to pick them up.

In the subsections of this chapter, we take a look at three editors that yield a good developer experience:

- VS Code: Partially open-source editor developed by Microsoft, has extensive plugin functionality, basically a clone of the once-popular Atom editor.

- Zed: Open-source editor written in Rust, comes with Rust support out of the box. Not available for Windows currently.

- Rust Rover: Commercial, but free-to-use for noncommerical applications, developed by JetBrains.

Rust Analyzer

Language servers are tools that parse and understand programming languages, and expose this data to IDEs. Unlike compilers, which run once and produce a binary, language servers are designed to run continuously, generate metadata such as inferred types of values, and implement high-level operations such as refactoring code.

The original language server for Rust was called Rust Language Server, and it used rustc to parse projects. This approached worked initially, but there were issues with latency. Additionally, rustc is not great at handling incomplete or broken code, which is important for language servers as they run while you write code. As a result, RLS was deprecated in 2022.

- graph of rls architecture

As a result, a new approach was taken that used a custom parser to be more

error-resiliant than rustc, called

rust-analyzer.

- graph of rust-analyzer architecture

The core piece that makes Rust IDEs possible is thus rust-analyzer, which is a project that understands Rust projects and implements the Language Server Protocol, which is a way for IDEs to understand them too and display type annotations, warnings, errors, suggestions.

In general, any IDE that supports the LSP protocol can be used for Rust development using rust-analyzer. The only exception is Rust Rover, which implements it’s own parser for Rust projects.

In general, you don’t need to know much about Rust Analyzer to use it. In fact,

many Rust IDEs even bundle it, and will manage and update it for you. You will

not even be aware that it is running in the background. But there are some

situations where you might need to be aware of its existence. If you use build

systems other than Cargo to build your Rust project, for example, then Rust

Analyzer might not be able to analyze your project. There might also be cases

where it has bugs, because it uses a different parser for Rust than rustc has.

Reading

The Rustup Book by Rust Language

Book for the Rustup tool used by the Rust community to install and manage Rust toolchains. It explains core concepts such as channels, toolchains, components and profiles, how to configure Rustup to use specific versions of the toolchain on a per-project basis.

Rust Analyzer Manual by Rust Analyzer

Explains what rust-analyzer is, and how to use it. It has instructions for the

best way to install it for every editor it supports, and outlines ways you can

configure it for your project.

Why LSP? by Alex Kladov

Alex explains what problem LSPs solve.

LSP could have been better by Alex Kladov

This article discusses architectural aspects of LSPs, that Alex does not find as brilliant.

LSP: The good, the bad and the ugly by Michael Peyton Jones

Improving ‘Extract Function’ in Rust Analyzer by Dorian Scheidt

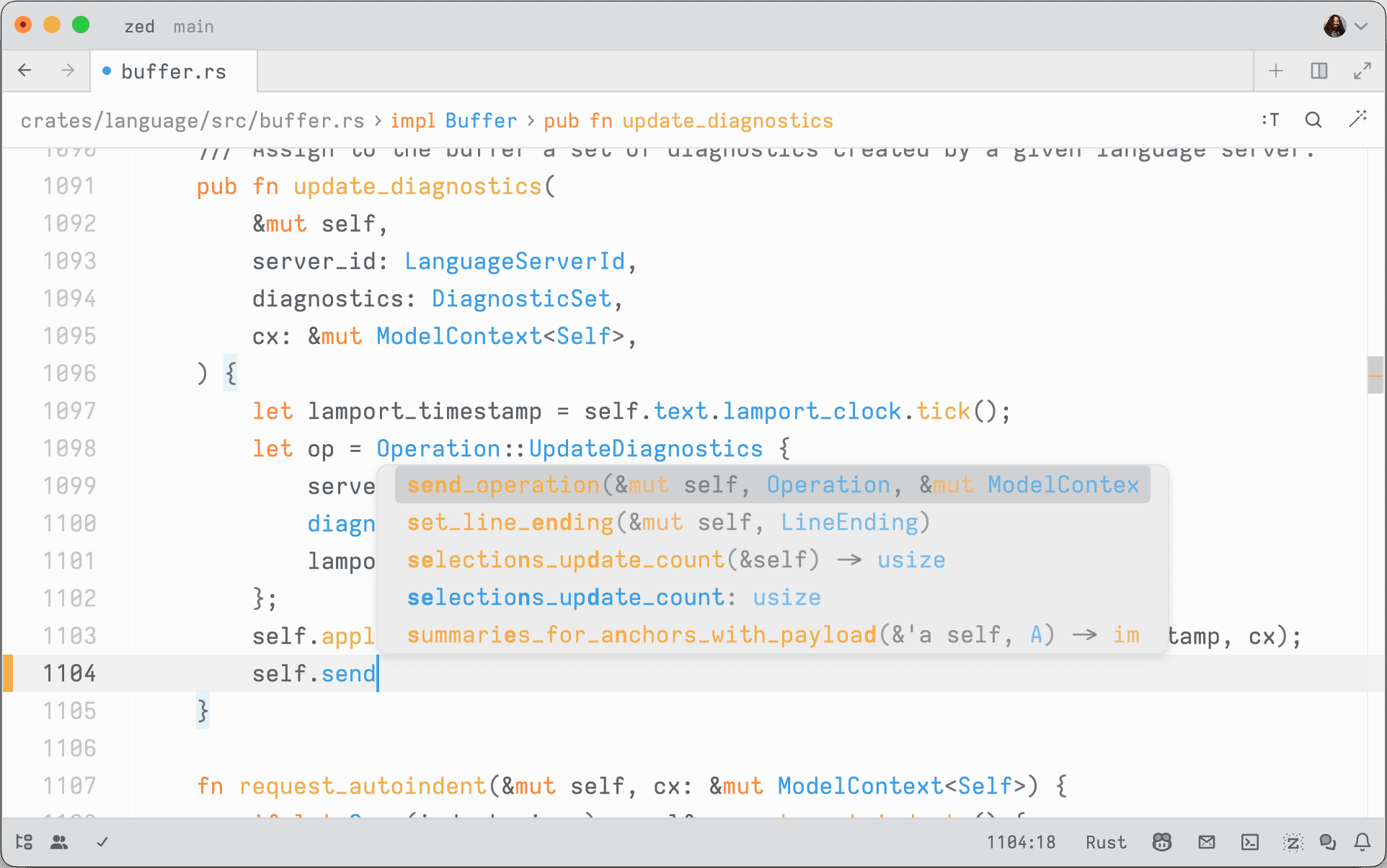

Zed

Zed is a code editor that comes with support for Rust out-of-the-box. It deserves a special mention because it itself is written in Rust. It is fairly minimalist, offering limited support for extensions (only themes, grammars and language servers can be extended). But the advantage is that it requires no setup, it understands and can work on Rust projects with no configuration.

If you just want an editor that you can use to write Rust code, and you only

need features that rust-analyzer comes with out of the box, then it is a good

choice. It is also open-source.

- screenshots of all features zed has for Rust projects

Features

Notes

Notably, the team behind Zed runs a blog documenting their experience building a cross-platform code editor in Rust, with deep dives into challenges they have faced in doing so and how they managed to tackle them. A lot of the articles there are good reading for anyone who is interested in Rust, cross-platform development, real-world asynchronous applications and the like.

Visual Studio Code

- screenshot of vscode (light/dark mode)

Visual Studio Code is a clone of the previously popular Atom editor that is sponsored by Microsoft. Compared to Visual Studio, it is lightweight and relatively fast, and has the advantage of being easily extensible. It has a vast ecosystem of plugins for various programming languages, including Rust.

Plugins

rust-analyzer

https://code.visualstudio.com/docs/languages/rust

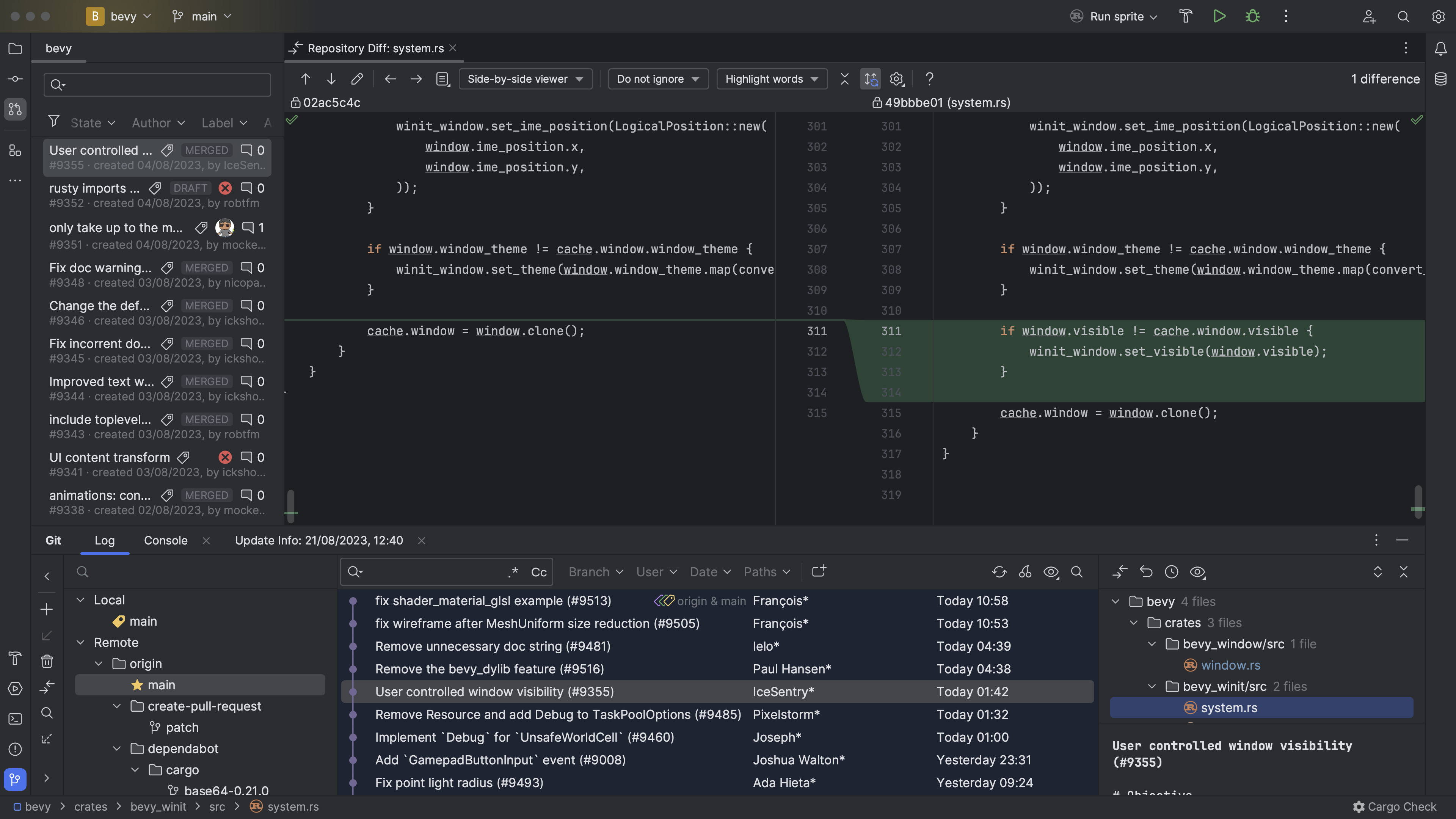

RustRover

RustRover is a commercial IDE offered by JetBrains. It has a deeper integration and more intelligent features than the other IDEs listed here, but is only free for personal use.

It is being actively developed, and new features that make writing Rust code and managing Rust projects are constantly added. The advantage is that it is all integrated and works out-of-the-box, unlike Visual Studio Code which needs some custom plugins that achieve what it can do.

The only downside of it is that it is commercial, meaning that it is not open-source.

Build system

Cargo is a great tool for building, cross-compiling and testing Rust software. It supports installing plugins that extend its functionality, many of which are discussed in this book. If your project consists only of Rust crates, then Cargo is all you need:

Things start to get tricky when you involve other languages (such as mixing Rust with C, C++, TypeScript) or when parts of your project need to be compiled for different targets (for example, compiling some crates to WebAssembly and embedding the output into other builds).

Example architectures

For example, some projects may need to interface with some legacy C/C++ code. In this case, building might involve compiling the library first:

Another common pattern when building full-stack web applications with Rust is that you might write the frontend in Rust and need to compile it to WebAssembly, and the backend in Rust. You want the Rust backend to serve the frontend, so it requires the WebAssembly output as a build input:

If you build a traditional web application with a TypeScript frontend and a Rust backend, you may need to run a TypeScript compiler for some part of your code and use the output as the input for your backend.

Other configurations are also possible, it depends on your particular need.

Build Systems

Build systems are high-level tools to orchestrate the build process. They track tasks and dependencies, and make sure that the build steps are run in the right order and rerun when any of the inputs have changed.

Good build systems will enforce hygiene by sandboxing build steps to make sure you do not accidentally depend on inputs you have not declared. This helps to avoid the “it works on my machine” syndrome, where your code accidentally depends on some system state that is present on your machine but not on others’.

However, build systems become interesting to your Rust project when one of three things happen:

- Inside your project, you have multi-language components. For example, a frontend written in TypeScript, a backend component written in Kotlin, a C library, some Python tooling.

- Inside your project, you have cross-target dependencies. For example, you

have a project fully written in Rust, and the backend wants to embed the

frontend compiled to WebAssembly using a tool such as

trunkfor ease of deployment. - You depend on some external dependency which is not written in Rust, and

you want to be sure you can use it reproducibly on all platforms. For example,

you depend on the presence of

sqlitein a specific version.

Many build systems also offer fully reproducible builds by requiring all build inputs and tools to be pinned down by hash, which enables distributed caching which is a big quality of life improvement for developers as it leads to faster development times.

This chapter discusses some build systems that play nice with Rust. Note that build systems are not necessarily mutually-exclusive: most of the time, even when using a build system that is not Cargo, you will still have the necessary Cargo manifests in the project that allows standard Cargo tooling to work.

Reading

The convergence of compilers, build systems and package managers by Edward Z. Yang

Edward explains how build systems, compilers and package managers seem to

converge. This is certainly the case for Rust, which has Cargo which acts as a

build system (cargo build) and package manager (cargo install). He explains

that this is not an isolated phenomenon, but inherent. It appears that we are

heading towards a more integrated approach.

Build Systems and Build Philosophy by Erik Kuefler

This chapter in the book discusses why build systems are vital in scaling software development, because they ensure that software can be built correctly on a number of different systems and architectures.

Chapter 4: Multi-language build system options by cxx crate

The CXX crate’s documentation discusses build system options for projects that mix Rust and C++. It recommends Cargo for projects without an existing C++ build system, Bazel for multi-language projects, and CMake for codebases already using it.

Build systems à la carte by Andrey Mokhov, Neil Mitchell and Simon Peyton-Jones

Paper which explain build systems, and how they work. It takes popular build systems apart and explains their properties. A useful paper for anyone trying to achieve a deep understanding of what build systems are and how they work.

Merkle trees and build systems by David Röthlisberger

David explores using Merkle trees to track build outputs. By storing build artifacts in OSTree (a content-addressable store where each directory’s hash is derived from its contents), any change to a file automatically propagates up through the tree. The build system can then use a single root hash to determine whether a rebuild is needed, enabling deduplication, automatic incremental rebuilds, and passing intermediate outputs between build steps without explicit naming.

Amazon’s Build System (archived) by Carl Meyers

Carl describes Brazil, Amazon’s internal build system. Brazil enforces reproducible builds through strict dependency isolation (only explicitly declared dependencies are available), uses “version sets” to manage compatible collections of package versions across hundreds of services, and separates interface versions from concrete build versions. The article argues that these properties are inevitable discoveries of any large engineering organization.

Build System Schism: The Curse of Meta Build Systems (archived) by Gavin D. Howard

Gavin gives a summary of the evolution of build systems, into the modern ones he calls meta build systems. He summarizes which features they have, and argues that Turing-completeness is a property that is required for a good build system.

Cargo

Cargo is the default build system for Rust projects. It makes it easy to create

build and test Rust code, manages dependencies from crates.io, and allows

you to publish your own crates there. It uses semantic versioning to resolve

dependency version from constraints you define and uses a lockfile to ensure you

are always building with the same dependency versions. Since rustc is

LLVM-based, it is also easy to cross-compile your Rust code for other targets,

see the list of supported Rust targets.

Cargo supports installing other tools that integrate into it and extend it with

new subcommands. This guide mentions several of such tools, such as cargo-hack

or cargo-llvm-cov.

One nice property of having Cargo as the default build system for all Rust

projects is that you can typically clone any repository that contains a Rust

crate and run cargo build to build it, or cargo test to run tests. This is

quite different to languages such as C, C++ or JavaScript that have a more

fragmented build ecosystem.

What Cargo Lacks

If you only use built-in commands and only build Rust code, then Cargo is a great build system for Rust projects. However, there are some features it does not have.

If you rely on plugins to build your project, such as trunk for building

WebAssembly-powered web frontend applications powered by Rust, Cargo will not

install it automatically. Rather, developers need to install it manually by

running cargo install trunk.

If you rely on native dependencies, such as OpenSSL or other libraries, Cargo

will not handle installing them on your behalf. There are some workarounds for

this, for example some crates like rusqlite ship the C code and have a feature

flag where Cargo will build the required library from source if you request it.

If you need to execute build steps, such as compiling C code or your have some parts of your project that use for example JavaScript, there is only rudimentary support for doing so with Cargo.

In short, Cargo is great at all things Rust, but it does not help you much if you mix other languages into your project. And that is by design: Cargo’s goal is not to reinvent the world. It does one thing, and it does it well, which is build Rust code.

The next sections discuss some approaches that you can use to use Cargo in situations that it is not designed for, but that yet seem to work.

Complex build steps

Cargo is great at building Rust code, but has few features for building projects that involve other languages. This makes sense, because such functionality is not needed by it.

Cargo does come with some support for running arbitrary steps at build time, through the use of build scripts. These are little Rust programs that you can write that are executed at build time and let you do anything you like, including building other code. It also supports linking with C/C++ libraries by having these build scripts emit some data that Cargo parses.

The other sections of this chapter are only relevant to you if your project

consists of a mixture of languages, and building it is sufficiently complex that

it cannot trivially be expressed or implemented in a build.rs file (such as:

it needs external dependencies).

build.rs to define custom build actions

If you have a few more complex steps that you need to do when building your code, you can always use a build script.

Build scripts in Cargo are little Rust programs defined in a build.rs in the

crate root which are compiled and run before your crate is compiled. They are

able to do some build steps (such as compile an external, vendored C library)

and they can emit some information to Cargo, for example to tell it to link

against a specific library.

Build scripts receive a number of environment variables as inputs, and output some metadata that controls Cargo’s behaviour.

A simple build script might look like this:

fn main() {

}For common tasks such as building C code, generating bindings for native libraries there are crates that allow you to write build scripts easily, these are presented in the next sections.

Compiling C/C++ Code

If you have some C or C++ code that you want built with your crate, you can use

the cc crate to do so. It is a helper library that you can call inside

your build script to run the native C/C++ compiler to compile some code, link it

into a static archive and tell Cargo to link it when building your crate. It

also has support for compiling CUDA code.

A basic use of this crate looks by adding something like this to the main

function of your build script:

#![allow(unused)]

fn main() {

cc::Build::new()

.file("foo.c")

.file("bar.c")

.compile("foo");

}The crate will take care of the rest of finding a suitable compiler and

communicating to Cargo that you wish to link the foo library.

Here is an example of how this looks like. In this crate, a build script is used to compile and link some C code, and the unsafe C API is wrapped and exposed as a native Rust function.

- src/

/target

# This file is automatically @generated by Cargo.

# It is not intended for manual editing.

version = 3

[[package]]

name = "anstream"

version = "0.6.15"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "64e15c1ab1f89faffbf04a634d5e1962e9074f2741eef6d97f3c4e322426d526"

dependencies = [

"anstyle",

"anstyle-parse",

"anstyle-query",

"anstyle-wincon",

"colorchoice",

"is_terminal_polyfill",

"utf8parse",

]

[[package]]

name = "anstyle"

version = "1.0.8"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "1bec1de6f59aedf83baf9ff929c98f2ad654b97c9510f4e70cf6f661d49fd5b1"

[[package]]

name = "anstyle-parse"

version = "0.2.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "eb47de1e80c2b463c735db5b217a0ddc39d612e7ac9e2e96a5aed1f57616c1cb"

dependencies = [

"utf8parse",

]

[[package]]

name = "anstyle-query"

version = "1.1.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "6d36fc52c7f6c869915e99412912f22093507da8d9e942ceaf66fe4b7c14422a"

dependencies = [

"windows-sys",

]

[[package]]

name = "anstyle-wincon"

version = "3.0.4"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "5bf74e1b6e971609db8ca7a9ce79fd5768ab6ae46441c572e46cf596f59e57f8"

dependencies = [

"anstyle",

"windows-sys",

]

[[package]]

name = "cc"

version = "1.1.15"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "57b6a275aa2903740dc87da01c62040406b8812552e97129a63ea8850a17c6e6"

dependencies = [

"shlex",

]

[[package]]

name = "clap"

version = "4.5.16"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "ed6719fffa43d0d87e5fd8caeab59be1554fb028cd30edc88fc4369b17971019"

dependencies = [

"clap_builder",

"clap_derive",

]

[[package]]

name = "clap_builder"

version = "4.5.15"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "216aec2b177652e3846684cbfe25c9964d18ec45234f0f5da5157b207ed1aab6"

dependencies = [

"anstream",

"anstyle",

"clap_lex",

"strsim",

]

[[package]]

name = "clap_derive"

version = "4.5.13"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "501d359d5f3dcaf6ecdeee48833ae73ec6e42723a1e52419c79abf9507eec0a0"

dependencies = [

"heck",

"proc-macro2",

"quote",

"syn",

]

[[package]]

name = "clap_lex"

version = "0.7.2"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "1462739cb27611015575c0c11df5df7601141071f07518d56fcc1be504cbec97"

[[package]]

name = "colorchoice"

version = "1.0.2"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "d3fd119d74b830634cea2a0f58bbd0d54540518a14397557951e79340abc28c0"

[[package]]

name = "heck"

version = "0.5.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "2304e00983f87ffb38b55b444b5e3b60a884b5d30c0fca7d82fe33449bbe55ea"

[[package]]

name = "is_terminal_polyfill"

version = "1.70.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "7943c866cc5cd64cbc25b2e01621d07fa8eb2a1a23160ee81ce38704e97b8ecf"

[[package]]

name = "levenshtein"

version = "0.1.0"

dependencies = [

"cc",

"clap",

"libc",

]

[[package]]

name = "libc"

version = "0.2.158"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "d8adc4bb1803a324070e64a98ae98f38934d91957a99cfb3a43dcbc01bc56439"

[[package]]

name = "proc-macro2"

version = "1.0.86"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "5e719e8df665df0d1c8fbfd238015744736151d4445ec0836b8e628aae103b77"

dependencies = [

"unicode-ident",

]

[[package]]

name = "quote"

version = "1.0.37"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "b5b9d34b8991d19d98081b46eacdd8eb58c6f2b201139f7c5f643cc155a633af"

dependencies = [

"proc-macro2",

]

[[package]]

name = "shlex"

version = "1.3.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "0fda2ff0d084019ba4d7c6f371c95d8fd75ce3524c3cb8fb653a3023f6323e64"

[[package]]

name = "strsim"

version = "0.11.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "7da8b5736845d9f2fcb837ea5d9e2628564b3b043a70948a3f0b778838c5fb4f"

[[package]]

name = "syn"

version = "2.0.77"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "9f35bcdf61fd8e7be6caf75f429fdca8beb3ed76584befb503b1569faee373ed"

dependencies = [

"proc-macro2",

"quote",

"unicode-ident",

]

[[package]]

name = "unicode-ident"

version = "1.0.12"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "3354b9ac3fae1ff6755cb6db53683adb661634f67557942dea4facebec0fee4b"

[[package]]

name = "utf8parse"

version = "0.2.2"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "06abde3611657adf66d383f00b093d7faecc7fa57071cce2578660c9f1010821"

[[package]]

name = "windows-sys"

version = "0.52.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "282be5f36a8ce781fad8c8ae18fa3f9beff57ec1b52cb3de0789201425d9a33d"

dependencies = [

"windows-targets",

]

[[package]]

name = "windows-targets"

version = "0.52.6"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "9b724f72796e036ab90c1021d4780d4d3d648aca59e491e6b98e725b84e99973"

dependencies = [

"windows_aarch64_gnullvm",

"windows_aarch64_msvc",

"windows_i686_gnu",

"windows_i686_gnullvm",

"windows_i686_msvc",

"windows_x86_64_gnu",

"windows_x86_64_gnullvm",

"windows_x86_64_msvc",

]

[[package]]

name = "windows_aarch64_gnullvm"

version = "0.52.6"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "32a4622180e7a0ec044bb555404c800bc9fd9ec262ec147edd5989ccd0c02cd3"

[[package]]

name = "windows_aarch64_msvc"

version = "0.52.6"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "09ec2a7bb152e2252b53fa7803150007879548bc709c039df7627cabbd05d469"

[[package]]

name = "windows_i686_gnu"

version = "0.52.6"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "8e9b5ad5ab802e97eb8e295ac6720e509ee4c243f69d781394014ebfe8bbfa0b"

[[package]]

name = "windows_i686_gnullvm"

version = "0.52.6"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "0eee52d38c090b3caa76c563b86c3a4bd71ef1a819287c19d586d7334ae8ed66"

[[package]]

name = "windows_i686_msvc"

version = "0.52.6"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "240948bc05c5e7c6dabba28bf89d89ffce3e303022809e73deaefe4f6ec56c66"

[[package]]

name = "windows_x86_64_gnu"

version = "0.52.6"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "147a5c80aabfbf0c7d901cb5895d1de30ef2907eb21fbbab29ca94c5b08b1a78"

[[package]]

name = "windows_x86_64_gnullvm"

version = "0.52.6"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "24d5b23dc417412679681396f2b49f3de8c1473deb516bd34410872eff51ed0d"

[[package]]

name = "windows_x86_64_msvc"

version = "0.52.6"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "589f6da84c646204747d1270a2a5661ea66ed1cced2631d546fdfb155959f9ec"

[package]

name = "levenshtein"

version = "0.1.0"

edition = "2021"

[dependencies]

# used to parse command-line arguments

clap = { version = "4.5.16", features = ["derive"] }

# used for FFI interface (defines size_t)

libc = "0.2.158"

[build-dependencies]

# used to build the levenshtein.c library

cc = "1.1.15"

# Levenshtein

Wrapper around [levenshtein.c][], a C library to compute the Levenshtein

distance between two strings. Also contains a command-line tool to compute the

distance for two strings passed as command-line parameters.

## Examples

You can build the library using Cargo. Ensure that you have a C compiler installed,

as this crate relies on the [cc][] crate to build the library.

```

$ cargo run -- "hello" "hello"

0

$ cargo run -- "kitten" "sitting"

3

```

[levenshtein.c]: https://github.com/wooorm/levenshtein.c

[cc]: https://docs.rs/cc/latest/cc/

/// Compiles the `levenshtein.c` library using the C compiler and instructs Cargo to link the

/// resulting archive.

fn main() {

cc::Build::new().file("src/levenshtein.c").compile("levenshtein");

}

// `levenshtein.c` - levenshtein

// MIT licensed.

// Copyright (c) 2015 Titus Wormer <tituswormer@gmail.com>

#include <string.h>

#include <stdlib.h>

#include <stdint.h>

#include "levenshtein.h"

// Returns a size_t, depicting the difference between `a` and `b`.

// See <https://en.wikipedia.org/wiki/Levenshtein_distance> for more information.

size_t

levenshtein_n(const char *a, const size_t length, const char *b, const size_t bLength) {

// Shortcut optimizations / degenerate cases.

if (a == b) {

return 0;

}

if (length == 0) {

return bLength;

}

if (bLength == 0) {

return length;

}

size_t *cache = calloc(length, sizeof(size_t));

size_t index = 0;

size_t bIndex = 0;

size_t distance;

size_t bDistance;

size_t result;

char code;

// initialize the vector.

while (index < length) {

cache[index] = index + 1;

index++;

}

// Loop.

while (bIndex < bLength) {

code = b[bIndex];

result = distance = bIndex++;

index = SIZE_MAX;

while (++index < length) {

bDistance = code == a[index] ? distance : distance + 1;

distance = cache[index];

cache[index] = result = distance > result

? bDistance > result

? result + 1

: bDistance

: bDistance > distance

? distance + 1

: bDistance;

}

}

free(cache);

return result;

}

size_t

levenshtein(const char *a, const char *b) {

const size_t length = strlen(a);

const size_t bLength = strlen(b);

return levenshtein_n(a, length, b, bLength);

}

#ifndef LEVENSHTEIN_H

#define LEVENSHTEIN_H

#include <stddef.h>

// `levenshtein.h` - levenshtein

// MIT licensed.

// Copyright (c) 2015 Titus Wormer <tituswormer@gmail.com>

// Returns a size_t, depicting the difference between `a` and `b`.

// See <https://en.wikipedia.org/wiki/Levenshtein_distance> for more information.

#ifdef __cplusplus

extern "C" {

#endif

size_t

levenshtein(const char *a, const char *b);

size_t

levenshtein_n (const char *a, const size_t length, const char *b, const size_t bLength);

#ifdef __cplusplus

}

#endif

#endif // LEVENSHTEIN_H

//! The Levenshtein distance measures how similar two words are, by how many substitutions are

//! needed to get from one word to the other. This Crate wraps a C library that implements this

//! algorithm in a safe Rust interface.

/// Raw access to the unsafe C API of the levenshtein library.

pub mod raw {

use libc::size_t;

use std::ffi::c_char;

extern "C" {

/// Raw binding to the C `levenshtein_n` function.

///

/// `a` and `b` must be valid pointers to character arrays, and `a_length` and `b_length`

/// their lengths respectively.

pub fn levenshtein_n(

a: *const c_char,

a_length: size_t,

b: *const c_char,

b_length: size_t,

) -> size_t;

}

}

/// Computes the Levenshtein distance between the strings `a` and `b`.

///

/// # Examples

///

/// The Levenshtein distance between two equal words is zero.

///

/// ```

/// # use levenshtein::levenshtein;

/// assert_eq!(levenshtein("hello", "hello"), 0);

/// ```

///

/// The Levenshtein distance between two words that have a single letter substituted is one.

///

/// ```

/// # use levenshtein::levenshtein;

/// assert_eq!(levenshtein("hello", "hallo"), 1);

/// ```

pub fn levenshtein(a: &str, b: &str) -> u64 {

use std::ffi::c_char;

let result = unsafe {

raw::levenshtein_n(

a.as_ptr() as *const c_char,

a.len(),

b.as_ptr() as *const c_char,

b.len(),

)

};

result as u64

}

#[test]

fn test_levenshtein() {

macro_rules! assert_distance {

($a:expr, $b:expr, $d:expr) => {

assert_eq!(levenshtein($a, $b), $d);

};

}

assert_distance!("", "a", 1);

assert_distance!("a", "", 1);

assert_distance!("", "", 0);

assert_distance!("levenshtein", "levenshtein", 0);

assert_distance!("sitting", "kitten", 3);

assert_distance!("gumbo", "gambol", 2);

assert_distance!("saturday", "sunday", 3);

// It should match case sensitive.

assert_distance!("DwAyNE", "DUANE", 2);

assert_distance!("dwayne", "DuAnE", 5);

// It not care about parameter ordering.

assert_distance!("aarrgh", "aargh", 1);

assert_distance!("aargh", "aarrgh", 1);

// Some tests form `hiddentao/fast-levenshtein`.

assert_distance!("a", "b", 1);

assert_distance!("ab", "ac", 1);

assert_distance!("ac", "bc", 1);

assert_distance!("abc", "axc", 1);

assert_distance!("xabxcdxxefxgx", "1ab2cd34ef5g6", 6);

assert_distance!("xabxcdxxefxgx", "abcdefg", 6);

assert_distance!("javawasneat", "scalaisgreat", 7);

assert_distance!("example", "samples", 3);

assert_distance!("sturgeon", "urgently", 6);

assert_distance!("levenshtein", "frankenstein", 6);

assert_distance!("distance", "difference", 5);

}

use clap::Parser;

#[derive(Parser)]

struct Options {

a: String,

b: String,

}

fn main() {

let options = Options::parse();

let distance = levenshtein::levenshtein(&options.a, &options.b);

println!("{distance}");

}

Note that in order to make the C function “visible” from Rust, you need to

declare it in an extern "C" block. It needs a function definition that matches

the one in the C header. Writing this by hand is error-prone, and can lead to

unsafety issues.

This example also shows how this unsafe C function is wrapped into a safe Rust function. Doing so involves dealing with raw pointers, and it is easy to get something wrong. It is important to write good Unit Tests, and often it can help to use Dynamic Analysis to make sure you did it correctly.

Compiling CMake Projects

If the native code you need to build uses CMake as its build system, the

cmake crate provides a build script

helper that invokes CMake and links the resulting library into your Rust binary.

It handles finding CMake, passing the right flags for the target platform, and

telling Cargo where the compiled library lives.

Generating Bindings for C/C++ Libraries

Writing extern "C" declarations by hand is tedious and error-prone.

bindgen automates this by parsing

C/C++ header files and generating the corresponding Rust FFI declarations. It is

typically used inside a build script to regenerate bindings whenever the headers

change. See the Interop chapter for more detail on

working with C and C++ from Rust.

Caching builds

You may find that Rust takes a long time to compile, which is certainly the

case. You can partially mitigate this by using a build cache, which is a service

that will cache the compiled artifacts and allow you to compile considerably

faster. One tool that lets you do this is sccache, which is discussed in

a future chapter.

Toolchain Pinning

If you depend on specific Cargo or Rust features, you may find that you can run into issues if people with older toolchain versions try to build your code. For this reason, it is sometimes useful to pin a specific version of the Rust toolchain in a project, to make sure everyone is using the same versions.

There are two mechanisms that you can use here, depending on where you want this pinning to work:

- You can use a

rust-toolchain.tomlfile to pin the Rust version for the current project. This file is picked up byrustup, which most people use to manage and update their Rust toolchain. When running any Cargo command in a project that has such a file,rustupwill ensure that the specified toolchain version is installed on the system and will only use that. - Conversely, if you are building a library and you want users of your library (as in, people that depend on your library as a dependency, but do not directly work on it) to use a specified minimum Rust toolchain version, you can set the MSRV in the Cargo metadata. This means that users of your library that are on older Rust versions will get an error or a warning when they try to add your library as a dependency.

Pinning the toolchain version for projects

The way you can solve this is by putting a

rust-toolchain.toml file into the repository. This will

instruct rustup to fetch the exact toolchain mentioned in this file whenever

you run any operations in the project.

A minimal rust-toolchain.toml that pins the stable toolchain and ensures

rustfmt and clippy are available looks like this:

[toolchain]

channel = "1.75"

components = ["rustfmt", "clippy"]

Keep in mind that this file is only picked up by people who use rustup to

manage their Rust toolchains. However, rustup is commonly used by Rust

developers to install and update their Rust toolchains, so this works well in

practise.

External tooling is also able to read and use these files. For example, when

using Nix to build Rust projects, the crane module can read this file and use

it to tell Nix which Rust toolchain to pick.

Specifying the minimum toolchain version for library crates

However, this rust-toolchain.toml file is only consulted when you are building

the current project. What if your crate is used as a dependency by other crates?

How can you communicate that it needs a certain version of the Rust compiler?

For this, Cargo has the option of specifying a MSRV for each crate. This is the minimum version of the Rust compiler that the crate will build with.

In a later chapter, we will show you how you can determine the MSRV programmatically and how you can test it to make sure that the version you put there actually works.

If you build library crates, you should specify the minimum version of the Rust toolchain that is needed to build your library. This helps other crate authors by telling them which version of Rust they need to use your library. You should always specify this.

Set the rust-version field in your Cargo.toml:

[package]

name = "my-library"

version = "0.1.0"

edition = "2021"

rust-version = "1.74"

Cargo will warn or error when someone tries to use your library with a toolchain older than this. See the MSRV chapter for how to verify this value is correct.

Common Commands

Cargo has a useful selection of built-in commands for managing Rust projects.

Initializing a Project

To quickly create a Cargo project, you can use cargo new. By default, it will

create a binary crate, but you can use the --lib flag to create a library

crate instead.

cargo new my-crate

Building and running Code and Examples

The main thing you likely use Cargo for is to build and run Rust code. Cargo has

two commands for this, cargo build and cargo run.

cargo build

cargo run

If you have multiple binaries and you want to build or run a specific one, you

can specify it using the --bin flag.

cargo build --bin my_binary

cargo run --bin my_binary

If you instead want to build or run an example, you can specify that using the

--example flag.

cargo build --example my_example

cargo run --example my_example

Running Tests and Benchmarks

Besides building and running Rust code, you will likely also use Cargo to run unit tests and benchmarks. It has built-in commands for this, too.

cargo test

cargo bench

As explained in the Unit testing section, you can

also use the external tool cargo-nextest to run tests faster.

Managing Dependencies

Cargo comes with built-in commands for managing dependencies. Originally, these commands were part of cargo-edit, but due to their popularity the Cargo team has decided to adopt them as first-class citizens and integrate them into Cargo.

cargo add serde

cargo remove serde

Recently, they also added support for Workspace dependencies. If you use

cargo-add to add a dependency to a crate, which already exists in the root

workspace as a dependency, it will do the right thing and add it as a workspace

dependency to your Cargo manifest.

You can also use Cargo to query the dependency tree. This lets you see a list of

all dependencies, and their child dependencies. It lets you find out if you have

duplicate dependencies (with different versions), and when that is the case, why

they get pulled in. For example, if you have one dependency that uses

uuid v1.0.0, but you depend on uuid v0.7.0, then you will end up with two

versions of the uuid crate that are being pulled in.

cargo tree

This command used to be a separate plugin called cargo-tree, but was incorporated into Cargo by the team due to it being useful.

Building Documentation

Cargo can generate API documentation from your doc comments using rustdoc. See

the Documentation chapter for more detail.

cargo doc

Installing Rust Tools

Besides just being a build system for Rust, Cargo also acts as a kind of package manager. Any binary Rust crates that are published on a registry can be compiled and installed using it. This is often used to install Cargo plugins or other supporting tools.

cargo install ripgrep

Compiling from source can be slow.

cargo-binstall is an

alternative that downloads pre-built binaries when available, falling back to

source compilation when not. Many popular tools publish pre-built binaries that

cargo-binstall can find automatically.

Profiling Builds

If you want to understand where Cargo is spending time during builds, you can use the built-in timing report. This generates an HTML page showing which crates took the longest to compile and how they overlapped:

cargo build --timings

Conclusion

If your project can get away with only using it to define and run all of the steps needed to build your project, then you should prefer it over using a third-party build system. Everyone who writes Rust code uses Cargo, it is very simple to use and comes with features that cover the majority of the use-cases you might run into.

If you do have a multi-language project, or a project with complicated build steps, you might soon find that build scripts are rather limited. Dependency tracking is possible with them, but it feels hacky. They are not hermetic, and there is no built-in caching that you can use. In this case, you may find it useful to take a look at the other popular build systems and determine if they might help you achieve what you want in a way that is more robust or more maintainable.

Do keep in mind that usually, using third-party build systems can be more pain than using Cargo itself, because they need to reimplement some functionality that you get for free when using Cargo. However, sometimes there are advantages that they bring that outweigh the additional complexity.

Reading

The Cargo Book by Rust Project

Reference guide for Cargo. This book discusses all features that Cargo has and how they can be used.

Build Scripts by The Cargo Book

Section in the Cargo Book that talks about using build scripts. It shows some examples for how they can be used and explains what can be achieved with them.

The Missing Parts in Cargo (archived) by Weihang Lo

Weihang, a Cargo team member, discusses features that Cargo lacks or that are still in development: pre/post-build hooks, better support for non-Rust code, build script sandboxing, and cross-compilation ergonomics. Useful context for understanding why some projects turn to external build systems.

Foreign Function Interface by The Rustonomicon

This chapter in The Rustonomicon explains how to interact with foreign functions, that is code written in C or C++, in Rust.

Bazel

Bazel is an open-source port of the Blaze build system used internally at Google. It is, in some ways, purpose built to solve the kinds of problems that Google faces: building large amounts of code in a giant monorepo with a very diverse set of client machines.

It excels at mixing and matching multiple programming languages, which makes it a great fit when you’re trying to integrate Rust into an existing C or C++ codebase, or build a web application that uses components written in different languages (such as TypeScript for the frontend, and Rust for the backend) but still want to have a simple build process.

It is also an artifact-centric rather than a task-centric build system.

Why Bazel?

It uses a high-level build language and supports multiple languages and platforms. One of Bazel’s key features is its ability to create reproducible builds, meaning that it ensures the output of a build is the same regardless of the environment it’s run in. This is achieved through strict dependency tracking and sandboxed execution environments. Bazel’s performance is enhanced by its advanced caching and parallel execution strategies, allowing it to only rebuild parts of the project that have changed since the last build, significantly reducing build times. It also scales seamlessly, facilitating its use in both small projects and massive codebases like those at Google. This makes Bazel particularly appealing for large, multi-language projects with complex dependencies, where build speed and consistency are critical.

How does Bazel work?

When you use Bazel, you declare how your project should be built in BUILD

files containing a description in the Starlark language, which is similar to

Python. In this language, you define all of the targets and dependencies. From

this, Bazel builds a graph of all targets and their dependencies.

Bazel will try to perform hygienic builds, meaning that you should not rely on native dependencies being available, but rather you tell Bazel how to build them itself. You can also have platform-specific targets and rules to ensure that your project can be built on any platform (that your developers use or deploy to).

Any external resources you rely on, you specify with a hash-sum to ensure that the compilation process is always deterministic.

Getting Started with Bazel

Bazel’s build configuration replaces or coexists with the typical Cargo metadata. This means that if you want to migrate a Rust project to use Bazel, you may need to duplicate some definitions.

Installing Bazel

While you can install Bazel, the recommended way to use it is to install bazelisk. Bazelisk is to Bazel as Rustup is to Rust: it manages multiple versions of Bazel and ensures that you are using the appropriate version in each project.

If you do use bazelisk, then you should add a file into your repository telling

it which version of Bazel your project should use. The simplest way to achieve

this is by creating a .bazelversion file containing the desired version of

Bazel:

7.3.1

The advantage of doing this is that you ensure all users will use exactly the same version of Bazel.

Project Setup

To use Bazel, you need to configure a Repository (used to be called a

Workspace). You can do this by creating a MODULE.bazel or REPO.bazel file in

the root of your repository.

Typically, if you work with Rust you will want to use [rules_rust][], which is a module that teaches Bazel how to build and interact with Rust projects. A sample Repository configuration might look like this

bazel_dep(name = "rules_rust", version = "0.48.0")

Examples

This sections shows off some example projects that showcase what using Bazel in

a Rust project looks like. Bazel comes with some

Rust examples

and the rules_rust comes with a more extensive

set of examples

that are also worth looking into.

Bazel Rust Hello World

- smallest possible bazel + rust project

Bazel Rust Workspace

- smallest possible bazel + rust workspace project

Mixing Rust and C

- smallest possible rust + native C code project

Full-stack Rust web application

- smallest possible backend + frontend project

Mixing Rust and JavaScript

- smallest possible rust + javascript (react) project

Integrating with Nix

It is possible to integrate Bazel with Nix. The idea is that Nix is a little bit better of a package manager, and that Bazel is a bit better as a build system. Nix is used to bootstrap the environment: the compiler, the native libraries. Bazel is then used as a build system.

If you don’t Nix, to get a true hermetic build environment you need to instruct it to build all native dependencies from source. You can avoid that when using Nix. And the fact that Nix has a public binary cache means that you rarely need to actually compile the thing you are using, most of the case Nix will be able to just pull it from the cache.

- https://nix-bazel.build/

- https://www.tweag.io/blog/2022-12-15-bazel-nix-migration-experience/

- https://www.tweag.io/blog/2018-03-15-bazel-nix/

- https://www.tweag.io/blog/2024-02-29-remote-execution-rules-nixpkgs/

- https://github.com/tweag/rules_nixpkgs

Reading

Scaling Rust builds with Bazel (archived) by Roman Kashitsyn

Roman explains how and why the Internet Computer project switched to using Bazel as it’s build system. He explains how Bazel is good at setting up builds that involve several languages or build targets, such as building some code for WebAssembly and using the resulting binaries as inputs to other builds. He walks you through the process they used to incrementally switch a large project to using Bazel and the implications it had. He considers the migration a success.

Using Bazel with Rust to Build and Deploy an Application (archived) by Enoch Chejieh

Enoch walks you through how to get started with a simple Rust project that uses Bazel to build. In particular, he shows to get get dependencies between several crates working, and unit tests running in Bazel.

Rewriting the Modern Web in Rust (archived) by Kevin King

Kevin shows how to set up a full-stack Rust application using Axum for the backend and Yew and the Tailwind CSS framework for the frontend. He shows how to use the Bazel build system to wrap it all together, including getting interactive rebuilds working. This is a good example to show how powerful Bazel is, as it involves building the frontend to WebAssembly and embedding it into the frontend.

Building Rust Workspace with Bazel (archived) by Ilya Polyakovskiy

Ilya shows you how you can make existing Rust Workspaces build with Bazel, by

taking the ripgrep crate, which is a popular search tool written in Rust and

converting it to use Bazel for building and testing.

Bazel rules_rust by rules_rust project

The rules_rust project is the official Rust bindings for Bazel. It lets you

tell Bazel about the crates you have, and how they depend on each other. If you

want to use Bazel to build Rust code, you should use this plugin.

Bazel: What It Is, How It Works, and Why Developers Need It by David Mavrodiev

This article is an overview of Bazel, it discusses the basics of hot it operates and what advantages it has for developers.

Birth of the Bazel (archived) by Han-Wen Nienhuys

Han-Wen explains how Bazel was born as an open source build system out of Google’s internal Blaze build system, and why the decision was made to open-source it.

Buck2

Buck2 (source) is written and maintained by Facebook, and is very similar to Bazel. What makes it interesting is that it is written in Rust, which makes it rather likely that it has good support for building Rust projects.

Interestingly, Buck2 uses the same language to write configuration as Bazel does, which is called Starlark. Both the syntax and the APIs are quite similar, but not close enough to say that they are compatible. Buck2 is quite new, having only been released in 2022.

What makes Buck2 exciting for us Rustaceans is that it itself is written in

Rust, and that it has good support for Rust out-of-the-box, without needing any

external plugins (as Bazel does with rules_rust).

Why Buck2?

As per their website, Buck2 is an extensible and performant build system written in Rust and designed to make your build experience faster and more efficient.

How does it work?

Examples

There are some examples using reindeer, which is used to translate Cargo dependencies into Buck2 configurations.

Building C/C++ code

Building JavaScript

Building WebAssembly

Reading

Build faster with Buck2: Our open source build system by Chris Hopman and Neil Mitchell

Introduction article of the Buck2 build system. Explains the features Buck2 has.

Buck2 build: Getting started by Buck2 Project

Getting started guide of the Buck2 build system.

Using Buck to build Rust Projects (archived) by Steve Klabnik

Steve explains how Buck2 can be used to build Rust projects.

Using Crates.io with Buck (archived) by Steve Klabnik

Steve shows how crates from [crates.io][] can be used in projects built by Buck2.

Updating Buck (archived) by Steve Klabnik

Steve shows how Buck2 can be updated.

Nix

Nix is a declarative package manager and build system. It lets you define dependencies and configurations in a functional language, and uses build isolation to ensure consistent and reproducible builds across machines.

The declarative nature of Nix makes it great at dealing with complex environments. It handles cross-platform builds correctly. Despite being over 20 years old, it has recently gained a lot of support. It is useful for providing consistent development setups between teams, ensuring that the code has the same environments between developers, CI machines and deployment machines.

Nix is quite versatile. It can be used to configure your system, set up a hygienic development shell containing only the dependencies you explicitly requested, build Docker images with the minimal set of runtime dependencies.

Nix Explainer

There are three main ways you can use Nix:

- Operating system: NixOS, which is built on top of Nix, is an entire operating system that you can use. It allows you to define everything on your work machine with the Nix language.

- Package manager: you can use Nix as a package manager. For example, you can use it to define (in your project) which dependencies it should make available (think libraries, frameworks, compilers). Nix will make these available, and it will make sure that no matter what machine or platform you build on, you always have exactly the same versions of those dependencies. On top of this, you can use a different build system, for example Buck or Bazel. In this configuration, Nix is only responsible for providing the dependencies your project needs to build.

- Build system: using something like Nix Flakes (we will discuss them later), you can define how every part of your project is built. Then Nix will build your project. Depending on what you are building, it can be a bit of work to get it building with Nix. The advantage you have if you do this is that you get reproducible builds, so no matter which machine you build on, you always get exactly the same output. You can also use caching, which makes builds faster for developers.

In this section, we will not take a look at NixOS. Mainly we will focus on using Nix as a build system, but we will also show how you could use it as a package manager in combination with another build system.

Nix Terminology

If you are new to Nix, it can be a bit confusing. Nix is both a language, and a package manager, and a build system. It uses Flakes and derivation. If you already know them, you can skip past the subheadings here, but it makes sense to explain how this all works together.

Derivations

At the very core of Nix is a derivation. This is how Nix tracks how to compile

things. It can take other derivations as input (via nativeBuildInputs,

buildInputs), some files (via src), and it has some shell scripts that

define how it is built, and how it is installed.

Here’s an example derivation (this is just a snippet, and not a full, working Nix config):

pkgs.stdenv.mkDerivation {

src = {

url = "https://github.com/xfbs/passgen/releases/v0.1.2/passgen-v0.1.2.tar.gz";

hash = "sha256-0000000000000000000000000000000000";

};

nativeBuildInputs = [ pkgs.cmake pkgs.ruby pkgs.python3 ];

buildPhase = ''

mkdir build

cd build

cmake ..

make -j

'';

installPhase = ''

make install

'';

};

Derivations are deterministic, which means that if you execute them again at a later date, or on a different machine, they are expected to produce exactly the same output. Nix uses some strategies to make that happen. For example, when your derivation is built, it runs in a sandbox where it only has access to the derivations it declared as inputs, nothing else. When it attempts to get the current time, it receives a timestamp of zero. Network access is blocked. Any external data the derivation uses must have a hashsum, and Nix checks it to make sure the data is still the same.

This allows Nix to use an aggressive caching strategy. It can use the hash of the derivation (this includes the hash of all transitive dependencies) as a key, and the output of it as values.

One of the important problems that Nix addresses here is that even Rust code has

implicit dependencies. For example, your Rust program is linked with some kind

of libc, typically glibc or musl. Which version you have depends on your

distribution, and how frequently you install updates. So if some code works on

your machine, it might not work on someone else’s machine, because you don’t use

the same versions. Similarly, if you use native dependencies like SQLite, it is

possible that you don’t have the same version as your coworker. What Nix ensures

is that, when you do build your code, everyone builds it with exactly the same

versions of all dependencies (compilers, libraries, headers).

Nixpkgs

When you build some code, you typically need a compiler. You might also need

some libraries, and you may want to use some tools (linters, maybe script

interpreters if your build process involves running scripts). Instead of having

to define derivations for each of these, Nix has a centralized repository called

nixpkgs, which contains Nix derivations for most popular packages. In the

derivation earlier, we showed that we used the nativeBuildInputs. The pkgs

that we wrote there refers to nixpkgs.

Nix Shell

Nix Shell is the feature that you can use if you want to use Nix as a package

manager. When you define a Nix Shell, you can tell Nix which dependencies you

need. When you launch it, Nix will open a new shell that has the dependencies

you specified available in its $PATH.

For example, this is what a simple shell might look like. Typically, you will

save this as shell.nix:

{

# todo

}

You can launch the shell with nix-shell. It will recognize the shell.nix

file in your current directory, and create a shell that links the tools you

specified.

You can use this in combination with other build systems. For example, if you use Bazel, then you can use a simple definition that includes Bazel.

Here is an example:

# todo

Note that even if you only use the Nix Shell, you may still want to use Nix

Flakes, for reasons that we will explain later (it has to do with pinning the

version of nixpkgs that you are using).

Nix Flakes

We’ve explained what a derivation is. But how do you write one? Nix has an experimental feature called flakes, which is typically what you want to use. Nix Flakes make it easy for you to specify the version of nixpkgs (that is where all preexisting software is packaged) and import Nix definitions from other repositories.

When you write your Nix derivations to build your code components, you typically want to use existing code. For example, you might want to use a Rust compiler toolchain, the SQLite library, and some tools. Nix has a large repository called nixpkgs which contain Nix definitions for most packages that you would find in other package managers.

But you might also want to import derivations from another source. For example,

you might want to import some Nix code that helps you turn Rust’s build metadata

(your Cargo.toml) into something Nix can understand and build. Or you might

import derivations from another repository that you use.

Nix Flakes allow you to write Nix code that has two definitions: a set of

inputs, which are typically Git repositories. This can be nixpkgs, or

helpers, or other flakes (in which case you can access their exported

derivations). And you can export outputs, which can be packagess

(derivations), apps (which are commands you can run) and definitions for how

to spawn a development shell.

Here is an example for what a derivation looks like:

{

description = "A very basic flake";

inputs = {

nixpkgs.url = "github:nixos/nixpkgs?ref=nixos-unstable";

};

outputs = { self, nixpkgs }: {

packages.x86_64-linux.hello = nixpkgs.legacyPackages.x86_64-linux.hello;

packages.x86_64-linux.default = self.packages.x86_64-linux.hello;

};

}

Even if the syntax might be unfamiliar, you can see two things:

- The flake has a description, which is just an informative string.

- The flake has some inputs, which are specified by URL.

- The flake has some outputs. This is a function that takes the parsed input

flakes as input, and returns some kind of structure. In this example, we

define some keys in the

packagesfield of the output structure. - We have hard-coded only output packages for the